Snowflake fabrics

You can now create fabrics that use Snowflake as the SQL warehouse for executing pipelines. Snowflake fabrics allow SQL pipelines to run directly in your Snowflake environment.

Snowflake fabrics are available for users who connect Prophecy to their own compute infrastructure. Once a Snowflake fabric is created, projects can run SQL pipelines using Snowflake as the execution engine.

Currently, Snowflake fabrics do not support case sensitivity, creating new partitioned tables, or modifying the partitioning of existing tables.

Import Alteryx workflows to BigQuery SQL

You can now migrate Alteryx workflows to BigQuery SQL using a BigQuery fabric. During import, the selected fabric determines the SQL dialect, allowing you to generate pipelines that run natively on BigQuery in addition to Databricks.

Rename and duplicate pipelines

You can now rename pipelines directly from the Project Explorer and duplicate existing pipelines from the Studio canvas. Duplicating a pipeline creates a copy of the entire pipeline structure, allowing you to reuse and modify existing workflows more quickly.

Export compiled SQL

You can now export the compiled SQL generated from your pipelines. This allows you to review the SQL produced by Prophecy, run it directly in your warehouse environment, or share it with teams working outside Prophecy.

New graph-based pipeline format

Pipeline Python files now use a graph-based structure instead of a task-based format. This improves clarity by showing how steps relate to one another, especially in pipelines with branching or multiple dependencies.

Databricks job sizes are now configured in fabrics

Databricks job cluster sizes are now configured at the fabric level. This change is part of the new unified fabric model and centralizes cluster configuration for projects that create Databricks jobs.

To create Databricks jobs from the Prophecy UI, you must first define one or more job sizes in the fabric settings.

Projects created before this change may not support Databricks job creation until job sizes are defined in the associated fabric.

This update ensures that cluster configurations are managed centrally and reused consistently across projects.

Scala JAR support for Spark 3.x and 4.x

Scala pipeline projects now generate version-specific JARs for Spark 3.x and Spark 4.x runtimes. Prophecy automatically selects the appropriate JAR based on the target cluster runtime.

This change ensures compatibility across environments using different Scala versions (2.12 for Spark 3.x and 2.13 for Spark 4.x), eliminating runtime errors caused by binary incompatibility.

To support this, pipeline builds now use Maven profiles to produce JARs for each supported Scala version from a single codebase.

Huge improvements to the Agent

Private Preview

Prophecy Agents have been completely redesigned to be more powerful, accurate, and delightful to work with. Try using the upgraded Agent to transform, document, and harmonize your data.

The Agent will now describe how it’s thinking as it works through your request, making the process transparent and easier to debug. This additional thinking also helps the Agent make better decisions and produce more accurate results. Because of this, you can prompt the Agent with more complex requests and decrease the time to results during development.

Analysis dashboards

You can now quickly gain insight into your data by asking the Agent to create data analyses for you. These analyses are available and customizable using dashboards, which you can share with your team or deploy on a schedule. See the analysis overview for more information.

Analysis dashboards were built on Prophecy Apps, which are now deprecated.

Visual container enhancements

Visual containers let you organize related gems in your pipeline for easier navigation and understanding. We now support nesting containers within other containers.

Parameter sets

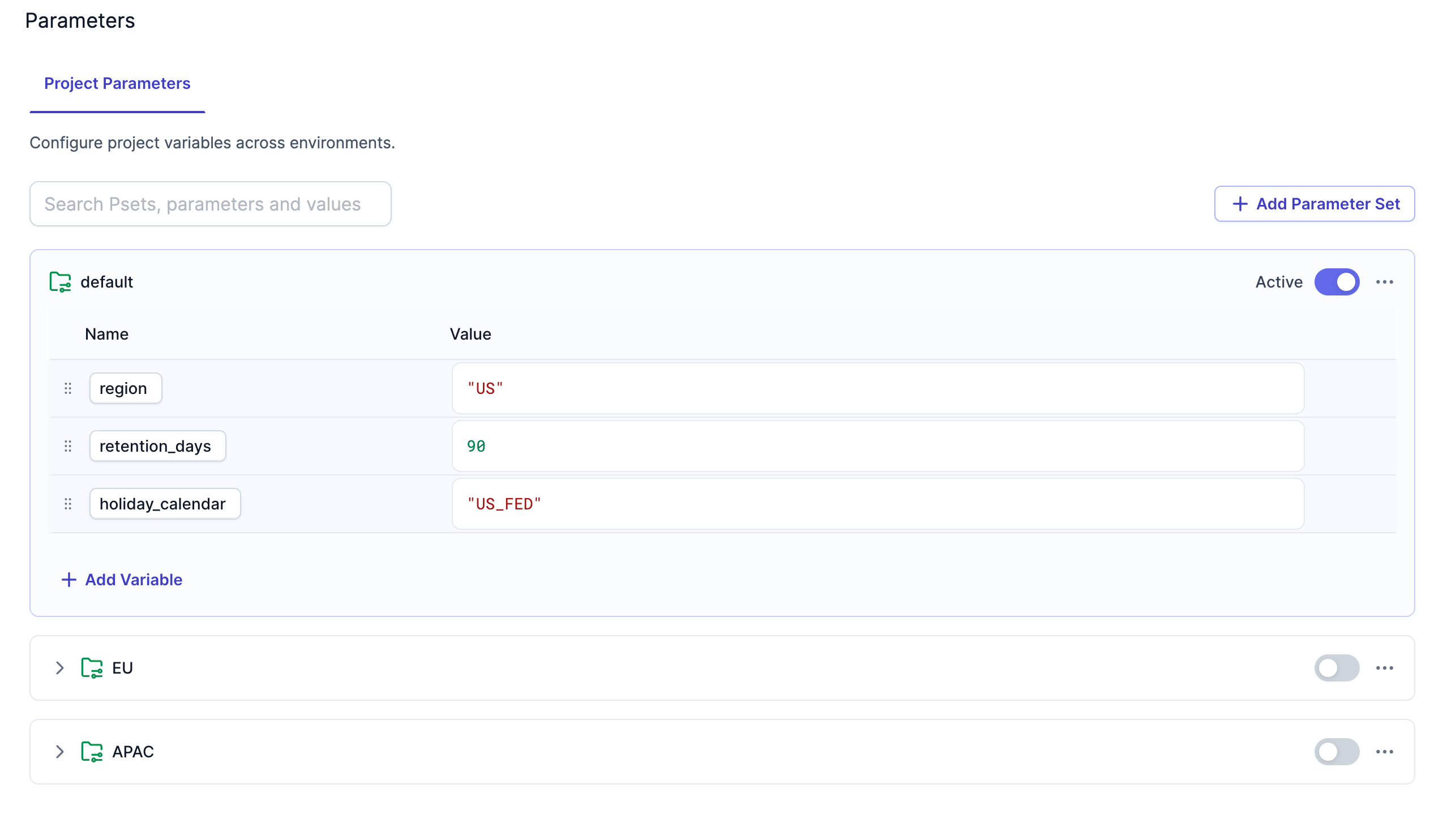

You can now create parameter sets to assign values to variables for different environments or deployment scenarios. Parameters can be global (project-level) or local (pipeline-level). You’ll be able to select the appropriate parameter set during project deployment or app runs.
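To make the global/local distinction concrete, here is a minimal sketch of how one named parameter set could be resolved for a single pipeline. The set names, parameter names, and the rule that pipeline-level values shadow project-level ones are all assumptions for illustration. They are not taken from Prophecy's implementation.

```python
from collections import ChainMap

# Hypothetical parameter sets; every name and value here is illustrative only.
GLOBAL_SETS = {  # project-level parameters
    "dev": {"catalog": "dev_catalog", "row_limit": 1000},
    "prod": {"catalog": "prod_catalog", "row_limit": None},
}
LOCAL_SETS = {  # pipeline-level parameters
    "dev": {"row_limit": 50},
    "prod": {},
}


def resolve_parameters(set_name, global_sets, local_sets):
    """Merge one named parameter set into a flat dict.

    Assumption: a local (pipeline-level) value shadows a global
    (project-level) value with the same name.
    """
    if set_name not in global_sets and set_name not in local_sets:
        raise KeyError(f"unknown parameter set: {set_name}")
    # ChainMap looks up keys left to right, so local values win.
    return dict(ChainMap(local_sets.get(set_name, {}), global_sets.get(set_name, {})))
```

Under these assumptions, selecting the "dev" set yields the project-level catalog together with the pipeline-level row limit, while "prod" falls back to project-level values throughout.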

Source and target file enhancements

We’ve made several enhancements to the source and target gems for file storage systems:

- Compression: Source gems can now read compressed files and target gems can now write compressed files in the following formats: gzip, zstd, lz4, zlib, snappy, and lzop.

- Examine: Gems now have an Examine button in the Location tab. When you click the Examine button, Prophecy reads a small sample of the file to automatically determine the file format, compression type (if any), and schema.

- Encryption: Target gems can now write encrypted files using the following encryption methods: AES-192, AES-256, and Blowfish. Source gems cannot read encrypted files.
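As a rough illustration of how a compression type can be inferred from a small file sample, as the Examine step describes, the sketch below checks the leading "magic" bytes defined by each format's public specification. The function name `sniff_compression` is hypothetical, and nothing here reflects how Prophecy implements detection. Raw Snappy blocks carry no magic bytes, so only the Snappy framing format is recognized, and the zlib check is a heuristic that can misfire on some plain text.

```python
import gzip
import zlib

# Leading bytes taken from each format's published specification.
MAGIC_PREFIXES = [
    (b"\x1f\x8b", "gzip"),
    (b"\x28\xb5\x2f\xfd", "zstd"),
    (b"\x04\x22\x4d\x18", "lz4"),           # LZ4 frame format
    (b"\x89LZO\x00\r\n\x1a\n", "lzop"),
    (b"\xff\x06\x00\x00sNaPpY", "snappy"),  # Snappy framing format only
]


def sniff_compression(head: bytes):
    """Guess the compression type from the first bytes of a file, or return None."""
    for prefix, name in MAGIC_PREFIXES:
        if head.startswith(prefix):
            return name
    # zlib has no fixed magic: the low nibble of byte 0 is 8 (deflate), the
    # window-size nibble is at most 7, and the 16-bit header is a multiple of 31.
    if (
        len(head) >= 2
        and head[0] & 0x0F == 8
        and (head[0] >> 4) <= 7
        and ((head[0] << 8) | head[1]) % 31 == 0
    ):
        return "zlib"
    return None


if __name__ == "__main__":
    print(sniff_compression(gzip.compress(b"hello")))
    print(sniff_compression(zlib.compress(b"hello")))
    print(sniff_compression(b"id,name\n1,a\n"))
```

Reading only a short prefix keeps the check cheap on large files. A real detector would usually confirm the guess by attempting to decompress the sample.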

PySpark for data analysis projects

Private Preview

Prophecy now offers Simplified PySpark for data analysis. This project type abstracts PySpark into a simple data analysis interface. You can switch between SQL and PySpark project types in the development settings of a project.

We’ve also added support for unit tests for PySpark projects. In future releases, we will support unit tests for SQL projects as well.

SAP HANA target gem

The SAP HANA target gem now supports two additional write modes: Delete and Insert, and SCD2.

ProphecySparkBasicsPython v0.2.54

This new version of the ProphecySparkBasicsPython package includes the following enhancements:

- Added support for bulk updating target columns during SCD1 Delta merge. This way, you don’t have to write individual update expressions for each column.

- Added support for lateral column aliases in Reformat and Join gems. Lateral column aliasing in PySpark allows you to reuse a column alias defined earlier in the same clause.
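For the bulk-update enhancement listed above, the idea can be sketched in plain Python: instead of writing one update expression per column, generate the whole column-to-expression map from the column list. The helper name `build_update_set`, the `source` alias, and the sample columns are hypothetical. This shows the general technique, not the package's actual code.

```python
def build_update_set(columns, key_columns, source_alias="source"):
    """Return a column -> expression map covering every non-key column.

    Key columns are skipped because they are used to match rows in the
    merge condition rather than being overwritten.
    """
    keys = set(key_columns)
    return {col: f"{source_alias}.{col}" for col in columns if col not in keys}


if __name__ == "__main__":
    # Hypothetical target columns, with "id" as the merge key.
    print(build_update_set(["id", "name", "email"], ["id"]))
```

A dictionary of this shape is the form accepted by the `set` argument of `whenMatchedUpdate` in the Delta Lake Python merge builder. When every column should be copied as-is, `whenMatchedUpdateAll()` covers the same case without building a map.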

Prophecy Library versions

- ProphecyLibsPython 2.1.9

- ProphecyLibsScala 8.16.0